TL;DR:

- A structured creative testing process helps identify effective ad variations quickly and accurately.

- Test only one variable at a time and ensure proper tracking to get reliable results.

- Run tests for 7 to 14 days or longer, with enough impressions and conversions per variant, before drawing conclusions.

Burning through ad budget on creatives that never connect is one of the most common frustrations in performance marketing. You launch a campaign, watch the spend climb, and wonder if the problem is the image, the headline, the audience, or all three. The good news: you don't have to guess. A structured creative testing process gives you real answers, faster. This guide walks you through exactly what you need to set up, how to run tests that actually mean something, how to read the results with confidence, and how to avoid the mistakes that quietly sabotage most testing efforts.

Table of Contents

- What you need before you start testing ad creatives

- Step-by-step guide: Executing ad creative tests

- Verifying results: Interpreting data and measuring success

- Troubleshooting: Avoiding common mistakes in ad creative testing

- Our take: What most guides miss about testing ad creatives

- Ready to upgrade your ad creative testing?

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Proper setup matters | Clear goals, solid tracking, and defined audiences are must-haves before running tests. |

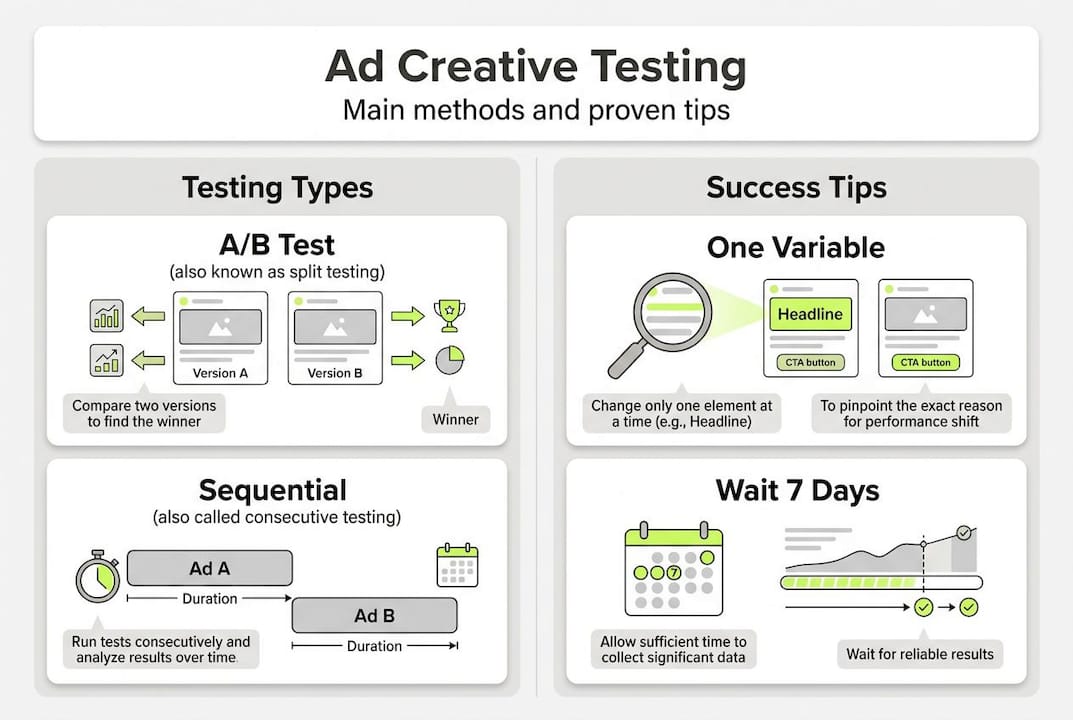

| Choose the right test type | Match your method (A/B, multivariate, sequential) to budget, time, and goals for meaningful results. |

| Verify before acting | Wait for adequate impressions and conversions to reach significance and trust your data. |

| Avoid common pitfalls | Limit test changes, watch for platform quirks, and refresh creative to prevent ad fatigue. |

| Iterate with hypotheses | Always test with a goal in mind and learn from every result for smarter campaigns. |

What you need before you start testing ad creatives

Skipping the setup phase is the fastest way to waste a testing budget. Before you run a single experiment, make sure your foundation is solid. That means having the right tools, clean tracking, and a clear goal for what you're trying to learn.

Here's what you need in place before launching any test:

- Creative variants: At minimum two versions of your ad, each with one distinct difference (headline, image, CTA, or format)

- Tracking pixels and tags: Properly installed and firing on all relevant conversion events across Meta and Google

- Platform access: Admin or advertiser-level access to your ad accounts with experiment features enabled

- Baseline data: At least two to four weeks of existing campaign performance to benchmark against

- Defined audience: Locked audience segments so you're not testing creative and targeting simultaneously

- Budget allocation: A minimum daily budget that allows each variant to gather meaningful data without starving

| Testing method | Tools needed | Minimum budget | Time to results |
|---|---|---|---|
| A/B test | Platform-native tools | $20 to $50/day per variant | 7 to 14 days |
| Multivariate | Analytics platform, larger creative library | $100+/day | 14 to 30 days |
| Sequential testing | Campaign scheduling tools | Flexible | 2 to 6 weeks |
| Pre-launch audience test | Survey or concept testing tool | Low | 3 to 7 days |
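To turn the A/B row of the table into a total spend figure, multiply the number of variants by the daily budget per variant and by the number of days. The sketch below is a back-of-the-envelope estimate only: it assumes spend is split evenly across variants and ignores learning-phase fluctuations, and the function name `estimate_test_budget` is hypothetical.

```python
def estimate_test_budget(variants: int, daily_per_variant: float, days: int) -> float:
    """Total test spend = variants x daily budget per variant x days.

    Assumes spend is split evenly across variants (illustrative only).
    """
    if variants < 2:
        raise ValueError("A creative test needs at least two variants")
    if daily_per_variant <= 0 or days <= 0:
        raise ValueError("Budget and duration must be positive")
    return variants * daily_per_variant * days


# Two-variant A/B test at the low and high ends of the table's ranges:
# $20/day for 7 days versus $50/day for 14 days.
low = estimate_test_budget(2, 20, 7)
high = estimate_test_budget(2, 50, 14)
print(f"A/B test budget: ${low:,.0f} to ${high:,.0f}")  # A/B test budget: $280 to $1,400
```

So even the smallest two-variant test at these rates commits a few hundred dollars, which is worth knowing before you decide between an A/B test and a lighter pre-launch audience test.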

Core testing methodologies include pre-launch audience testing, in-platform A/B testing, sequential approaches, and multivariate frameworks, each suited to different budgets and goals. Choosing the right method upfront saves time and money. If you're still developing your ad creatives, finalize those assets before testing begins.

Pro Tip: Never launch a test without first verifying that your pixel is firing correctly on every conversion event. A broken tracking setup means your results are fiction, not data.

Step-by-step guide: Executing ad creative tests

Once your setup is confirmed, it's time to run the test. The steps below follow a standard A/B framework, which is the most reliable starting point for most SMBs.

- Form a hypothesis. Don't just change things randomly. State what you expect and why. Example: "A testimonial-based image will outperform a product-only image because our audience responds to social proof."

- Build your variants. Create exactly two versions, changing only one element. Keep everything else identical, including the remaining copy, the CTA, the audience, and the budget.

- Set up the experiment in-platform. Use Meta's A/B Test tool or Google's campaign experiment feature. Both allow you to split spend evenly and track results independently.

- Define your success metric. Decide before launching whether you're optimizing for CTR, CPA, or ROAS. Don't change this mid-test.

- Launch and monitor passively. Resist the urge to make changes. Let the algorithm stabilize and data accumulate.

- Evaluate at the right time. Google Ads testing guidance recommends running tests for 7 to 14 days and reaching statistical significance before drawing conclusions.

- Document and act. Record what you tested, what won, and why. Apply the winner and build your next hypothesis from the learnings.
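One way to enforce steps 1, 2, 4, and 7 is to write the plan down as a record that cannot be edited once the test launches. The sketch below is a minimal, hypothetical test log (the class and field names are illustrative, not part of any ad platform): the plan is a frozen dataclass, so changing the success metric mid-test raises an error, and the outcome is filled in only when the test closes.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Success metrics named in this guide; extend as needed.
ALLOWED_METRICS = {"CTR", "CPA", "ROAS"}


@dataclass(frozen=True)
class CreativeTestPlan:
    """Written before launch. frozen=True blocks edits once the test is live."""
    hypothesis: str
    variable: str          # the single element that differs between variants
    success_metric: str    # chosen before launch, never changed mid-test
    launched: date

    def __post_init__(self) -> None:
        if self.success_metric not in ALLOWED_METRICS:
            raise ValueError(f"Unknown success metric: {self.success_metric}")


@dataclass
class CreativeTestRecord:
    """Pairs the locked plan with the outcome you document afterwards."""
    plan: CreativeTestPlan
    ended: Optional[date] = None
    winner: Optional[str] = None
    learning: str = ""

    def close(self, ended: date, winner: str, learning: str) -> None:
        self.ended, self.winner, self.learning = ended, winner, learning

    @property
    def duration_days(self) -> Optional[int]:
        return None if self.ended is None else (self.ended - self.plan.launched).days


# Illustrative values only.
plan = CreativeTestPlan(
    hypothesis=("A testimonial-based image will outperform a product-only "
                "image because our audience responds to social proof."),
    variable="image",
    success_metric="CPA",
    launched=date(2024, 3, 1),
)
record = CreativeTestRecord(plan)
record.close(date(2024, 3, 15), winner="variant B",
             learning="Note why the winner won and what to test next.")
print(record.duration_days)  # 14
```

Keeping the plan immutable is a deliberate design choice: it makes "don't change the success metric mid-test" a rule the code enforces rather than one you have to remember.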

| Test type | Speed | Accuracy | Complexity | Best for |
|---|---|---|---|---|
| A/B | Fast | High | Low | Single variable isolation |
| Multivariate | Slow | Very high | High | Multiple elements at once |
| Sequential | Medium | Medium | Medium | Budget-limited accounts |

Before you scale, it's worth taking a deeper look at how A/B testing in ads works across platforms, since the mechanics differ between them.

Pro Tip: Isolate one variable per A/B test, every time. Testing two elements simultaneously makes it impossible to know which change drove the result.
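A quick pre-launch check is to diff the two ad configurations and confirm that exactly one element changed. The sketch below assumes you describe each ad as a plain dictionary; the field names are hypothetical and do not reflect any platform's schema.

```python
from typing import List


def changed_elements(control: dict, variant: dict) -> List[str]:
    """Return the keys whose values differ between the two ad configs."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def single_test_variable(control: dict, variant: dict) -> str:
    """Return the one element under test, or raise if the test isn't isolated."""
    diff = changed_elements(control, variant)
    if len(diff) != 1:
        raise ValueError(f"Expected exactly one changed element, found {diff}")
    return diff[0]


# Hypothetical ad configs; field names are illustrative only.
control = {"headline": "Spring sale", "image": "product.jpg", "cta": "Shop Now",
           "audience": "past_visitors_30d", "daily_budget": 30}
variant = {**control, "image": "testimonial.jpg"}

print(single_test_variable(control, variant))  # image
```

If someone also tweaks the CTA in the variant, `single_test_variable` raises and lists both changed fields, which is exactly the situation the tip warns against.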

Verifying results: Interpreting data and measuring success

Running a test is only half the work. Knowing when and how to read the results is what separates confident decisions from expensive guesses.

Here are the key metrics to track across Meta and Google campaigns:

- CTR (click-through rate): The share of impressions that turn into clicks, a direct read on how compelling your creative is

- CPA (cost per acquisition): Tells you what each conversion costs, critical for budget efficiency

- ROAS (return on ad spend): The ultimate revenue-level measure of creative performance

- Hook rate (video): The share of impressions in which viewers watch past the first three seconds

- ThruPlay rate (video): The share of impressions in which viewers watch the ad to completion or for at least 15 seconds
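All five metrics are simple ratios of raw counts, so you can recompute them yourself from an export. The sketch below assumes hypothetical field names (not a platform export format) and uses impressions as the denominator for hook rate and ThruPlay rate; some teams divide by video plays instead, so match whatever convention your reporting uses.

```python
import math
from dataclasses import dataclass


@dataclass
class VariantStats:
    impressions: int
    clicks: int
    conversions: int
    spend: float
    revenue: float
    video_3s_views: int = 0   # views of at least three seconds (video only)
    thruplays: int = 0        # completions or 15+ second views (video only)


def safe_ratio(numerator: float, denominator: float) -> float:
    """Divide, returning NaN instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else math.nan


def creative_metrics(s: VariantStats) -> dict:
    return {
        "CTR": safe_ratio(s.clicks, s.impressions),          # clicks / impressions
        "CPA": safe_ratio(s.spend, s.conversions),           # spend / conversions
        "ROAS": safe_ratio(s.revenue, s.spend),              # revenue / spend
        "hook_rate": safe_ratio(s.video_3s_views, s.impressions),
        "thruplay_rate": safe_ratio(s.thruplays, s.impressions),
    }


# Illustrative numbers for one video variant.
stats = VariantStats(impressions=20_000, clicks=300, conversions=25,
                     spend=500.0, revenue=1_750.0,
                     video_3s_views=6_000, thruplays=2_400)
for name, value in creative_metrics(stats).items():
    print(f"{name}: {value:.3f}")
```

Returning NaN for a zero denominator (for example, CPA before the first conversion) keeps an early report from crashing while making it obvious that the metric isn't meaningful yet.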

Three ways to confirm a winner with confidence:

- The winning variant shows consistent improvement across at least two primary metrics, not just one

- Results hold steady over multiple days, not just a single spike

- The difference is large enough to be meaningful, not just a rounding error
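The three checks above can be written as explicit rules so every test is judged the same way. In the sketch below, the 10% minimum lift and the 70% share of winning days are hypothetical placeholders, not thresholds from this guide; set them to whatever your team agrees on before the test starts. The daily series is the success metric (CPA here) for each day of the test.

```python
LOWER_IS_BETTER = {"CPA"}  # a lower CPA is an improvement


def relative_lift(metric: str, control: float, variant: float) -> float:
    """Relative improvement of variant over control; positive means better."""
    if metric in LOWER_IS_BETTER:
        return (control - variant) / control
    return (variant - control) / control


def confirm_winner(control: dict, variant: dict, daily_control: list,
                   daily_variant: list, success_metric: str = "CPA",
                   min_lift: float = 0.10, min_win_share: float = 0.7):
    """Apply the three checks; the default thresholds are hypothetical."""
    if not daily_control or len(daily_control) != len(daily_variant):
        raise ValueError("Daily series must be non-empty and the same length")
    # Check 1: improvement on at least two primary metrics.
    improved = [m for m in ("CTR", "CPA", "ROAS")
                if relative_lift(m, control[m], variant[m]) > 0]
    # Check 2: the variant wins on most individual days, not one spike.
    days_won = sum(relative_lift(success_metric, c, v) > 0
                   for c, v in zip(daily_control, daily_variant))
    checks = {
        "improves_two_plus_metrics": len(improved) >= 2,
        "holds_across_days": days_won / len(daily_control) >= min_win_share,
        # Check 3: the overall lift on the success metric is large enough.
        "lift_is_meaningful": relative_lift(success_metric, control[success_metric],
                                            variant[success_metric]) >= min_lift,
    }
    return all(checks.values()), checks


# Illustrative totals and daily CPA values over a 7-day test.
control = {"CTR": 0.012, "CPA": 25.0, "ROAS": 2.8}
variant = {"CTR": 0.015, "CPA": 21.0, "ROAS": 3.3}
daily_cpa_control = [26, 24, 27, 25, 23, 26, 24]
daily_cpa_variant = [22, 21, 24, 20, 23, 19, 21]

is_winner, checks = confirm_winner(control, variant,
                                   daily_cpa_control, daily_cpa_variant)
print(is_winner, checks)
```

Returning the individual checks alongside the verdict shows you which condition failed when a variant looks promising but doesn't qualify yet.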

Statistical significance is non-negotiable. Before you trust a significance result, make sure each variant has at least 1,000 impressions and 10 to 30 conversions, collected over a runtime of 7 to 14 days or longer.

"Wait until you have at least 1,000 impressions and 10 to 30 conversions per variant before calling a winner. Patience here protects your budget downstream."
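The sketch below combines the minimum-data floors with a significance test. It uses a standard two-proportion z-test, which is one common choice for comparing rates such as CTR; it is not necessarily what Meta or Google compute internally, and the `Arm` structure and function names are hypothetical. The floors use the low end of the ranges above (1,000 impressions, 10 conversions, 7 days).

```python
import math
from dataclasses import dataclass


@dataclass
class Arm:
    """Cumulative results for one variant."""
    impressions: int
    clicks: int
    conversions: int
    days: int


def ready_to_evaluate(arm: Arm, min_impressions: int = 1_000,
                      min_conversions: int = 10, min_days: int = 7) -> bool:
    """Rule-of-thumb floors from this guide; raise them if you can afford to."""
    return (arm.impressions >= min_impressions
            and arm.conversions >= min_conversions
            and arm.days >= min_days)


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int):
    """Two-sided z-test for a difference between rates x1/n1 and x2/n2."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("Rates are 0% or 100% in both arms; the test is undefined")
    z = (p2 - p1) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value under N(0, 1)
    return z, p_value


# Illustrative data: comparing CTR (clicks / impressions) after two weeks.
control = Arm(impressions=20_000, clicks=240, conversions=24, days=14)
variant = Arm(impressions=20_000, clicks=300, conversions=31, days=14)

if ready_to_evaluate(control) and ready_to_evaluate(variant):
    z, p = two_proportion_z_test(control.clicks, control.impressions,
                                 variant.clicks, variant.impressions)
    verdict = "significant" if p < 0.05 else "not significant yet"
    print(f"z = {z:.2f}, p = {p:.4f} -> {verdict} at the 5% level")
else:
    print("Keep the test running; minimum thresholds not met")
```

The z-test relies on a normal approximation, which is rough when counts are small; if you apply it to conversion rates near the 10-conversion floor, treat a borderline p-value as a reason to keep the test running.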

Rushing to a conclusion after 48 hours is one of the most expensive habits in paid media. Platforms need time to exit the learning phase and distribute spend fairly. If you're also tracking Google Ads ROI at the campaign level, align your test metrics with your broader performance goals so the data tells a consistent story.

Troubleshooting: Avoiding common mistakes in ad creative testing

Even well-intentioned tests go wrong. Knowing the failure modes in advance puts you ahead of most advertisers.

Common testing pitfalls include attribution differences between platforms, creative fatigue setting in faster than expected, and misaligned test audiences that skew results before you even begin.

Here are the critical mistakes to avoid:

- Testing too many variables at once: Makes it impossible to identify the cause of any performance shift

- Ending tests too early: Premature decisions based on incomplete data lead to false winners

- Ignoring platform learning periods: Meta and Google both have learning phases where delivery is unstable; results during this window are unreliable

- Mismatched audiences across variants: Even small audience differences can invalidate your results

- Neglecting creative fatigue: Audiences tune out the same creative quickly, especially on Meta. Ad campaign ROI drops noticeably when fatigue sets in.

Attribution differences between platforms are also worth flagging. Meta and Google often report conversions differently due to view-through vs. click-through attribution windows. Always compare results within the same platform before drawing cross-channel conclusions. It's also worth reviewing how Meta ads strategies handle attribution before you set up your reporting.
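In practice, "compare within the same platform" means ranking variants separately per platform rather than in one combined list. The sketch below assumes a hypothetical list of result rows with `platform`, `variant`, and metric fields; the numbers are illustrative.

```python
from collections import defaultdict


def winners_by_platform(rows, metric="CPA", lower_is_better=True):
    """Pick a winning variant separately for each platform.

    Each platform counts conversions under its own attribution rules,
    so variants are only ranked against others from the same platform.
    """
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["platform"]].append(row)
    pick = min if lower_is_better else max
    return {platform: pick(variants, key=lambda r: r[metric])["variant"]
            for platform, variants in grouped.items()}


# Illustrative rows: reported CPA per variant on each platform.
rows = [
    {"platform": "Meta", "variant": "A", "CPA": 22.0},
    {"platform": "Meta", "variant": "B", "CPA": 18.5},
    {"platform": "Google", "variant": "A", "CPA": 30.0},
    {"platform": "Google", "variant": "B", "CPA": 34.0},
]
print(winners_by_platform(rows))  # {'Meta': 'B', 'Google': 'A'}
```

A single combined ranking would crown variant B because of its Meta CPA alone, even though variant A won on Google, which is the kind of cross-channel conclusion the attribution caveat warns against.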

Pro Tip: Refresh your ad creatives every 2 to 4 weeks. Frequency-driven fatigue is one of the quietest budget drains in paid media, and regular creative rotation keeps your learnings current and your CPAs stable.
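You can turn the 2-to-4-week guideline into a simple rule: always refresh at four weeks, and refresh earlier (but not before two weeks) if performance has clearly decayed. The sketch below uses a CTR drop from the creative's early baseline as a stand-in for fatigue, rather than frequency itself, and the 20% drop threshold and the `refresh_reason` name are hypothetical.

```python
from typing import Optional


def refresh_reason(age_days: int, baseline_ctr: float, recent_ctr: float,
                   min_age_days: int = 14, max_age_days: int = 28,
                   max_ctr_drop: float = 0.20) -> Optional[str]:
    """Return why a creative should be refreshed, or None to keep it running.

    The 14- and 28-day bounds mirror the 2-to-4-week guideline; the 20% CTR
    drop used as a fatigue signal is a hypothetical threshold.
    """
    if age_days >= max_age_days:
        return f"creative is {age_days} days old"
    if age_days >= min_age_days and baseline_ctr > 0:
        drop = (baseline_ctr - recent_ctr) / baseline_ctr
        if drop >= max_ctr_drop:
            return f"CTR is down {drop:.0%} from its baseline"
    return None


print(refresh_reason(18, baseline_ctr=0.015, recent_ctr=0.011))  # CTR is down 27% from its baseline
print(refresh_reason(18, baseline_ctr=0.015, recent_ctr=0.014))  # None
print(refresh_reason(30, baseline_ctr=0.015, recent_ctr=0.015))  # creative is 30 days old
```

If your reporting exposes frequency, you could add it as a second trigger alongside the CTR check.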

Our take: What most guides miss about testing ad creatives

Here's the uncomfortable truth we've seen play out with SMB brands repeatedly: most teams test for the sake of testing. They swap a button color, change a font, or rotate a new image, then call it a creative test. That's not testing. That's guessing with extra steps.

The brands that actually improve performance over time build a hypothesis first. They ask: why would this change move the needle? The answer has to connect to something real about the audience, the offer, or the channel behavior.

We also push back on the idea that faster is better. Platforms market their quick-test features aggressively, but shorter tests on smaller budgets often produce noise, not signal. A two-week test with 500 conversions per variant will teach you more than six rushed three-day tests combined.

For SMBs especially, the highest-leverage move is improving your core concept and hook, not just swapping visuals. If the fundamental message isn't resonating, no amount of creative polish will fix it. Learn to test ad copy smarter and you'll compound those learnings across every campaign you run.

Ready to upgrade your ad creative testing?

If this guide gave you clarity on the process, imagine what a dedicated team running these tests for you could do for your results. At A&T Digital Agency, we build and manage performance-driven ad systems for SMBs across Google and Meta, from creative strategy through execution and ongoing optimization. We don't just test randomly. We build hypotheses, run structured experiments, and scale what works.

Our Google Ads management and Meta ads management services are built around exactly the kind of data-driven creative testing this article covers. If you're ready to stop guessing and start scaling, we're ready to help.

Frequently asked questions

How many ad variants should I test at once?

Test no more than 2 to 4 creatives per ad set to avoid spreading budget too thin. A/B testing methodology recommends limited variants to keep results reliable and actionable.
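To see how your budget caps the number of creatives, divide the ad set's daily budget by a per-variant floor. This sketch assumes the $20/day low end of the A/B budget range from the setup table as that floor and applies the 4-creative ceiling; `max_variants` is a hypothetical helper, not a platform feature.

```python
def max_variants(ad_set_daily_budget: float, min_per_variant: float = 20.0,
                 cap: int = 4) -> int:
    """How many creatives an ad set budget can fund at the per-variant floor.

    A result below 2 means the budget can't support a test yet.
    """
    return min(cap, int(ad_set_daily_budget // min_per_variant))


for budget in (30, 60, 100):
    print(f"${budget}/day supports up to {max_variants(budget)} variant(s)")
```

At $30/day, for example, there's room for only one variant at the $20 floor, so you'd need a bigger budget or a longer runtime before testing.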

How long should a creative test run?

Let tests run 7 to 14 days minimum. Statistical significance requires at least 1,000 impressions and 10 to 30 conversions per variant before you can trust the outcome.

What metrics matter most when evaluating ad creative?

Prioritize CTR, CPA, and ROAS for most campaigns. For video, also track hook rate and ThruPlay rate. Platform guidance recommends aligning your primary metric with your campaign objective before the test begins.

How often should I refresh ad creatives?

Refresh creatives every 2 to 4 weeks. Creative fatigue sets in quickly on high-frequency platforms like Meta, and stale ads drive up costs while dragging down performance.